Wargaming: running incident response plan tests - part 1

Cyber exercising is important for checking how a team responds to an incident.

I've touched on the importance of testing business continuity plans before, but I've not yet discussed how testing your cyber security incident response is equally important. Indeed, an incident could lead you to be in business continuity or disaster recovery mode. In this post I'm going to talk about running incident response simulations (wargaming) and what you learn through the process.

What's a cyber security incident?

In the context of this blog post I'm thinking about an event caused by a third party, a malicious insider, or a member of staff by accident. For example, a malicious actor could infect your systems with ransomware and start encrypting your files. Once an incident has been detected it's important to contain it to limit the damage.

You've got a plan, right?

The most effective way to respond to an incident is to follow a plan you've already got (these are sometimes referred to as playbooks). By having an existing, documented plan your responders don't have to think about what to do next - they just focus on dealing with the problem at hand. Importantly, the plan needs to be available during the incident (paper copies can be helpful) and should be understandable to your technical team, even those who perhaps wouldn't normally get involved. Staff absence can mean extra people get pulled in at short notice.

It won't surprise me if your organisation doesn't have a plan. Back when I was in charge of a school network I didn't have one, and fortunately nothing ever took hold of the network. Over the last nine years, cyber security has become a bigger focus for organisations (and my career) and people are starting to think about problematic scenarios. Having the plan written down is the next step.

Schrödinger's plan

Those working with backups may have come across the concept of Schrödinger's backup, named after Erwin Schrödinger's cat-based thought experiment. In his experiment the cat is considered simultaneously alive and dead - it's unknown which is the case until the cat is observed. I digress a bit, but Schrödinger's backup says:

The condition of any backup is unknown until a restore is attempted.

In much the same way, the effectiveness of your incident response plan is unknown until you have to use it. By testing your plan you can identify what worked well, and importantly what needs changing, before you have an incident. As a result of the rehearsal, staff are better placed to react to a real incident which, hopefully, reduces the overall impact on the organisation.

Testing methods

There are a few methods available here, ranging from table top exercises (the scenario is described, as are people's chosen actions) to walk-throughs (like a table top, but literally walking to where each action would take place) to simulations, which I'll cover in more depth in this series. As the name implies, a simulation involves performing actions in response to a mock attack - something happening in real time. It's important to remember that a simulation is as close to a "live fire" exercise as you're likely to get (I'm not going to unleash real ransomware on the network - that would be a career ender!) and thus actions could have real consequences.

Ideally the simulation should be conducted in a test environment that closely resembles your real environment - a similar number of devices, the same operating systems and tools. In my case I didn't have a spare training environment comprising hundreds of servers, or the network traffic of hundreds of people, so I arranged my simulation on the production network. You may think that sounds risky (it is), so let's cover reducing that risk...

Running tests inside production

Using the production environment means that any logs the incident responders look at will be real. Similarly, any firewall traffic analysis will be real. This is fantastic, as the team will be working under almost identical conditions to a real incident (only the payload is different), but it is obviously not ideal because any action they take ("hey, let's switch off the Internet connection!") will have a direct impact on everyone else. We want to avoid our testing causing a real-world outage!

To mitigate this I explain during the kick off meeting that they will be working in the production environment, so I want them to tell me before any changes are made. Following that rule means there won't be any problems because, as the facilitator, I can say "don't do that". Obviously I won't be running a test that requires an outage as the win condition - that would be silly.

I also look to reduce the risk further by running my "infected device" on a piece of hardware that could be safely taken offline. A spare laptop is great for this, especially if it's hidden in another part of the building. As I'm writing this during the pandemic, with many of our offices shut, I used a virtual machine on our test infrastructure instead.

Choosing a simulation tool

For the simulation to be useful it needs to generate a signature similar to that which would be seen during a real attack. We're going to need a tool that can generate traffic that we can detect in logs, and I want to give the UK's National Cyber Security Centre (NCSC) a shout out here. The NCSC provides Exercise in a Box (EiaB) which contains various exercises you can run through. At the time of writing there's only one simulation exercise, but there are table top and short "coffee break" exercises too. What I like about the NCSC offering is the fact that everything is pretty much given to you in "the box" - facilitator notes, injects (more on those in part two), a tool to generate traffic and questions to evaluate the exercise afterwards.
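
To give a feel for what "detecting the traffic in logs" might look like, here's a minimal Python sketch. Everything in it is an assumption made for illustration: the whitespace-separated log format (timestamp, source, destination, action), the sample lines, the `sim-c2.example.test` hostname and the `find_beacons` function are all invented, and none of it comes from EiaB or any particular firewall. Your own logs will almost certainly look different.

```python
from collections import Counter


def find_beacons(log_lines, indicator):
    """Count log entries per source whose destination equals the indicator.

    Assumes a hypothetical log format of four whitespace-separated
    fields: timestamp, source, destination, action.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 4:
            continue  # skip blank or malformed lines
        _timestamp, src, dst, _action = parts[:4]
        if dst == indicator:
            hits[src] += 1
    return hits


# Invented sample data using documentation-only addresses and names.
sample = [
    "2021-02-01T10:00:00 192.0.2.10 sim-c2.example.test ALLOW",
    "2021-02-01T10:00:05 192.0.2.22 intranet.example.test ALLOW",
    "2021-02-01T10:01:00 192.0.2.10 sim-c2.example.test ALLOW",
    "garbled line",
]

for source, count in find_beacons(sample, "sim-c2.example.test").items():
    print(f"{source} contacted the simulated server {count} time(s)")
```

In a real exercise the responders would be doing this kind of search in whatever log tooling they already use; the point is simply that the simulated traffic has to leave a trail they can find.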

Other tools exist, and I invite you to search using your favourite search engine. I would, however, caution you to make sure whatever you run comes from a reputable source. You do not want to run something that actually does infect your network!!

Preparing for the exercise

Once you've chosen your tool you need to determine who should be present for the session. As a general rule you'll want someone at management level who can approve decisions (or someone to act in that role), technical staff to search for the problem, and someone to handle communications with other areas of the business. Other roles can also be useful (e.g. note taker, help desk staff) and it's entirely up to you how many people you involve, or if some people perform multiple roles. You want to make sure people aren't sat around doing nothing - it's important everyone benefits from the simulation.
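
If you like to sanity check your invite list, something like the following sketch could help. It's only an illustration: the role names, the `REQUIRED_ROLES` set and the `check_roster` function are my own invented labels for the three roles described above, and the names in the roster are made up.

```python
# Hypothetical labels for the three core roles described in the text.
REQUIRED_ROLES = {"decision maker", "technical responder", "communications"}


def check_roster(roster):
    """Return (missing_roles, idle_people) for a mapping of person -> roles.

    missing_roles: required roles that nobody on the roster covers.
    idle_people: attendees who have no role assigned at all.
    """
    covered = {role for roles in roster.values() for role in roles}
    missing_roles = sorted(REQUIRED_ROLES - covered)
    idle_people = sorted(name for name, roles in roster.items() if not roles)
    return missing_roles, idle_people


# Made-up attendees; one person can hold more than one role.
roster = {
    "Alex": ["decision maker"],
    "Sam": ["technical responder", "note taker"],
    "Jo": [],
}

missing, idle = check_roster(roster)
print("Roles still to fill:", missing)
print("People with nothing to do:", idle)
```

Anyone who turns up in the second list either needs a role or probably doesn't need to be in the session.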

You will likely find that some of the people you invite are fearful that this is a test of them and that there'll be some negative impact on their job if something goes wrong. Allaying this fear is really important, so highlight to people that the aim is to test the process, not the people. There's also the benefit that training needs can be identified, so let people know that you'll look to arrange training if that's the case[1].

Having arranged a date and time for everyone to turn up (in person or virtually) I'd highly recommend performing a dry run on your own. Check that the tool actually works (in my case that it actually connects out to a remote server) and that the documentation you'll share makes sense. If you've ever done a live demo, and had it go wrong, you'll know the sort of pressure and "egg on your face" moment that you're looking to avoid here!
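
As part of that dry run, a quick connectivity check can confirm that the test device is able to reach the remote server at all. The sketch below is a generic TCP check using Python's standard library, not part of any simulation tool; the `can_reach` function name, and whatever host and port you pass to it, are assumptions you'd replace with the details from your chosen tool's documentation.

```python
import socket


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout.

    Any failure (refused, timed out, name not resolved) returns False.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

You might call it as `can_reach("simulation-server.example.test", 443)`, where the hostname is a placeholder. A `False` result before the session gives you time to look at firewall rules or proxy settings, rather than finding out in front of everyone.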

In part two of this series I'll cover actually running the simulation exercise.

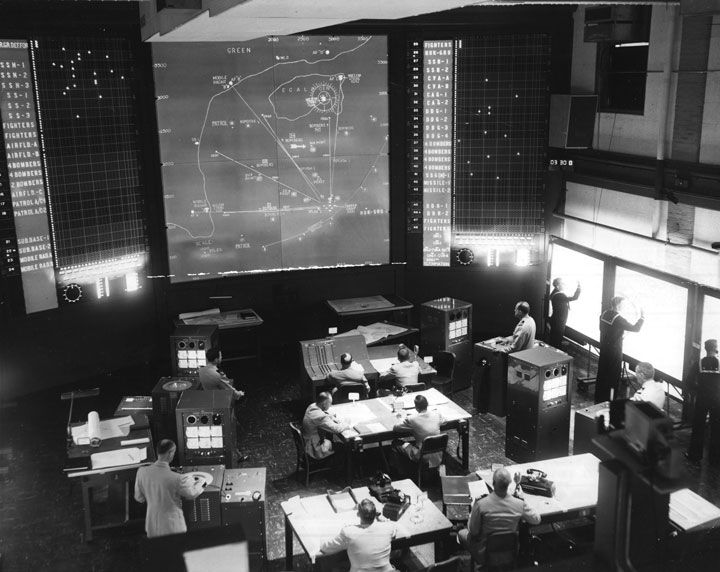

Banner image: A wargame being conducted at the US Naval War College in 1958. From Wikimedia Commons, public domain.

[1] Training should be a continual event for anyone working in IT and / or cyber security. If your organisation doesn't make time for training, or provide training resources to employees for use in work time, I'd strongly recommend you do so.